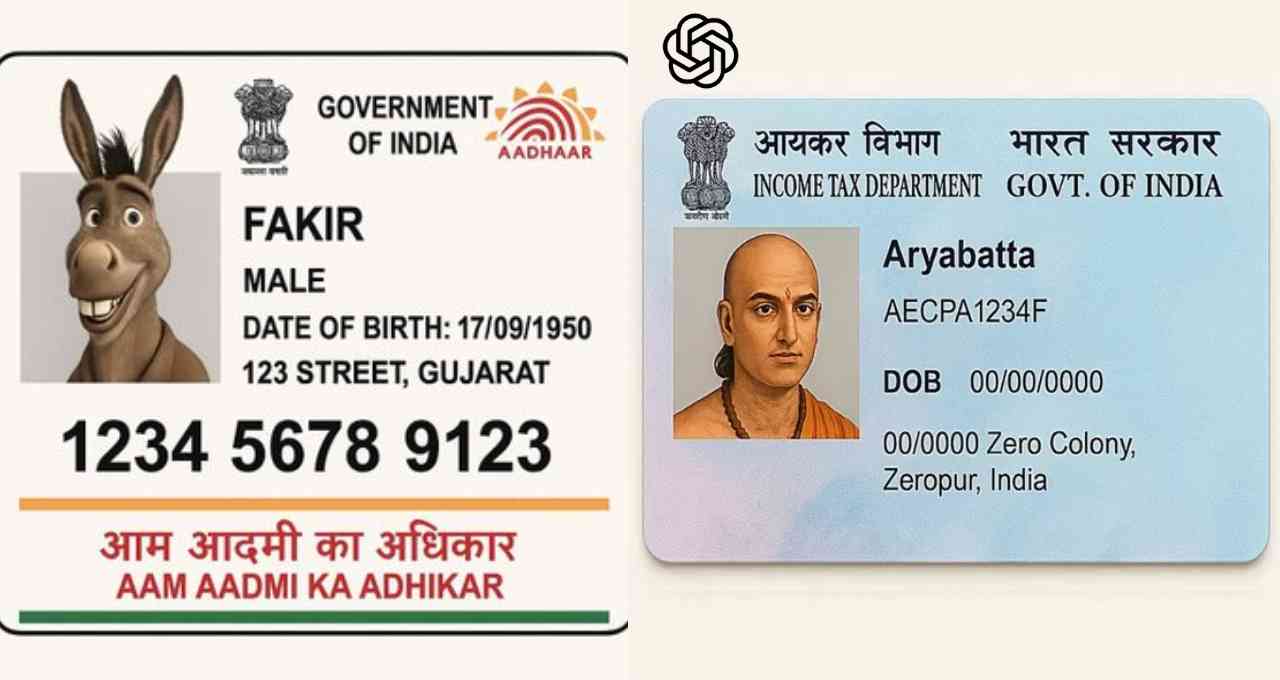

ChatGPT, once hailed as a major achievement in the field of Artificial Intelligence (AI), is increasingly being viewed as a potential source of serious cyber threats. Recently, several social media users claimed that OpenAI's GPT-4 model can generate fake Aadhaar and PAN cards. Some users even demonstrated on X (formerly Twitter) how they had created fake documents using only a name, date of birth, and address. These incidents raise serious concerns about privacy and data security.

Fake Documents Go Viral on Social Media, Users Express Concerns

The experiences users have reported with GPT-4 are alarming. One user, Yashwant Sai Palaghat, wrote, "ChatGPT is instantly generating fake Aadhaar and PAN cards, which is a serious security risk." Another user, Piku, stated, "A perfect replica of an Aadhaar card was created just by giving the name and DOB. The question is, who is providing AI companies with such precise formatting information?"

Images shared alongside these claims show forged IDs that look remarkably authentic, which increases the potential for fraud. Although the numbers on these cards do not correspond to any real documents, the accuracy of their design and layout is a significant warning sign.

Questions Raised About the Formatting of Government Identity Cards

These incidents raise questions about how AI companies obtained the format and layout of Aadhaar and PAN cards. Was there a data leak, or did the AI learn them from publicly available images? Genuine Aadhaar cards do carry security features such as secure QR codes, holograms, and digital signatures that distinguish them from fakes. However, these features only help when someone actually checks them. A realistic-looking forgery can easily deceive anyone who accepts a card at face value, which makes misuse far easier.

Demand for AI Regulation Intensifies, Need to Expand Legal Scope

In response to this new AI-related threat, experts and users alike are calling for stricter AI regulation. Tighter rules and oversight are needed to prevent data privacy breaches, fake document creation, and cybercrime. Controlling the power of AI tools like ChatGPT and GPT-4 is no longer only a technological responsibility but also a social and legal one. If these issues are not addressed promptly, this technology could become one of the most powerful tools for fraud in the future.